Short posts

Sickening to believe this is how stuff works today

“Unconscionable,” Jon thought as he found an email address online for the lead prosecutor, Joseph Dernbach, who was named in the story. Peering through metal-rimmed glasses, Jon opened Gmail on his computer monitor.

“Mr. Dernbach, don’t play Russian roulette with H’s life,” he wrote. “Err on the side of caution. There’s a reason the US government along with many other governments don’t recognise the Taliban. Apply principles of common sense and decency.”

That was it. In five minutes, Jon said, he finished the note, signed his first and last name, pressed send and hoped his plea would make a difference.

Five hours and one minute later, Jon was watching TV with his wife when an email popped up in his inbox. He noticed it on his phone.

“Google,” the message read, “has received legal process from a Law Enforcement authority compelling the release of information related to your Google Account.”

Listed below was the type of legal process: “subpoena.” And below that, the authority: “Department of Homeland Security.”

https://www.washingtonpost.com/investigations/2026/02/03/homeland-security-administrative-subpoena/

Please don’t try clawdbot/moltbot/openclaw unless you know what you’re doing. (Or at least let me know so I can hack you and write about it.)

New story: ICE’s masks, guns and tactical gear are easy to see. But did you know about all the less visible tech they use? We explain it all here -> https://wapo.st/4bXee03 w Eva Dou and Artur Galocha

I usually keep my baseball thoughts to group texts, but $60 million AAV for Kyle Tucker is just disgusting

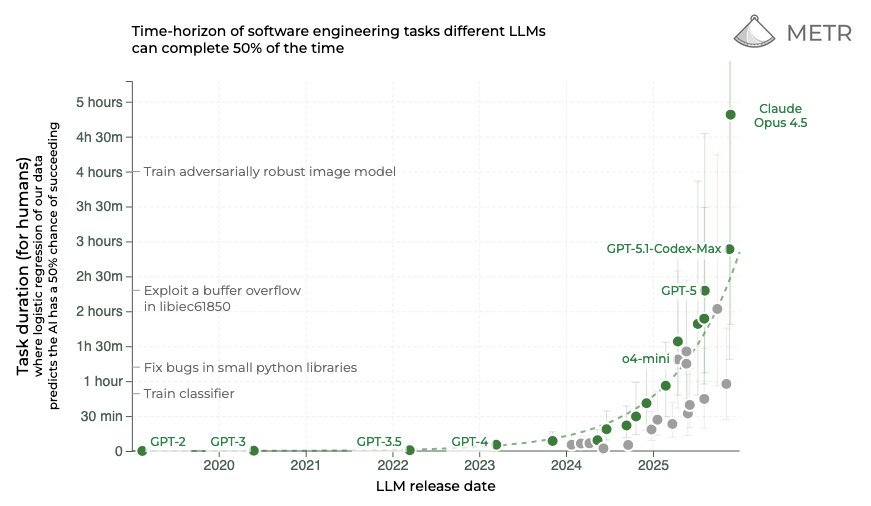

Opus 4.5 result on METR task duration is wild

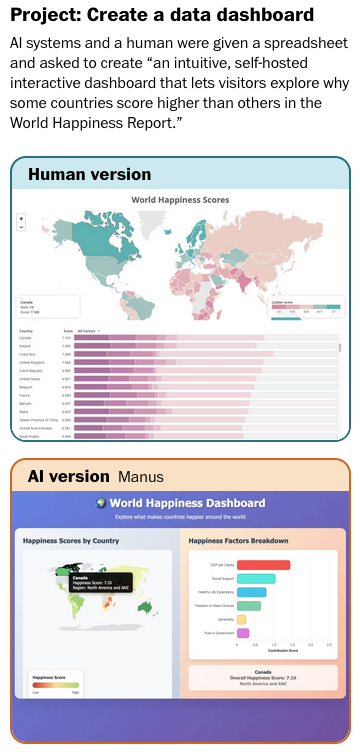

New from me: Researchers tested AI on hundreds of real freelance jobs. The best system successfully completed just 2.5% of them.

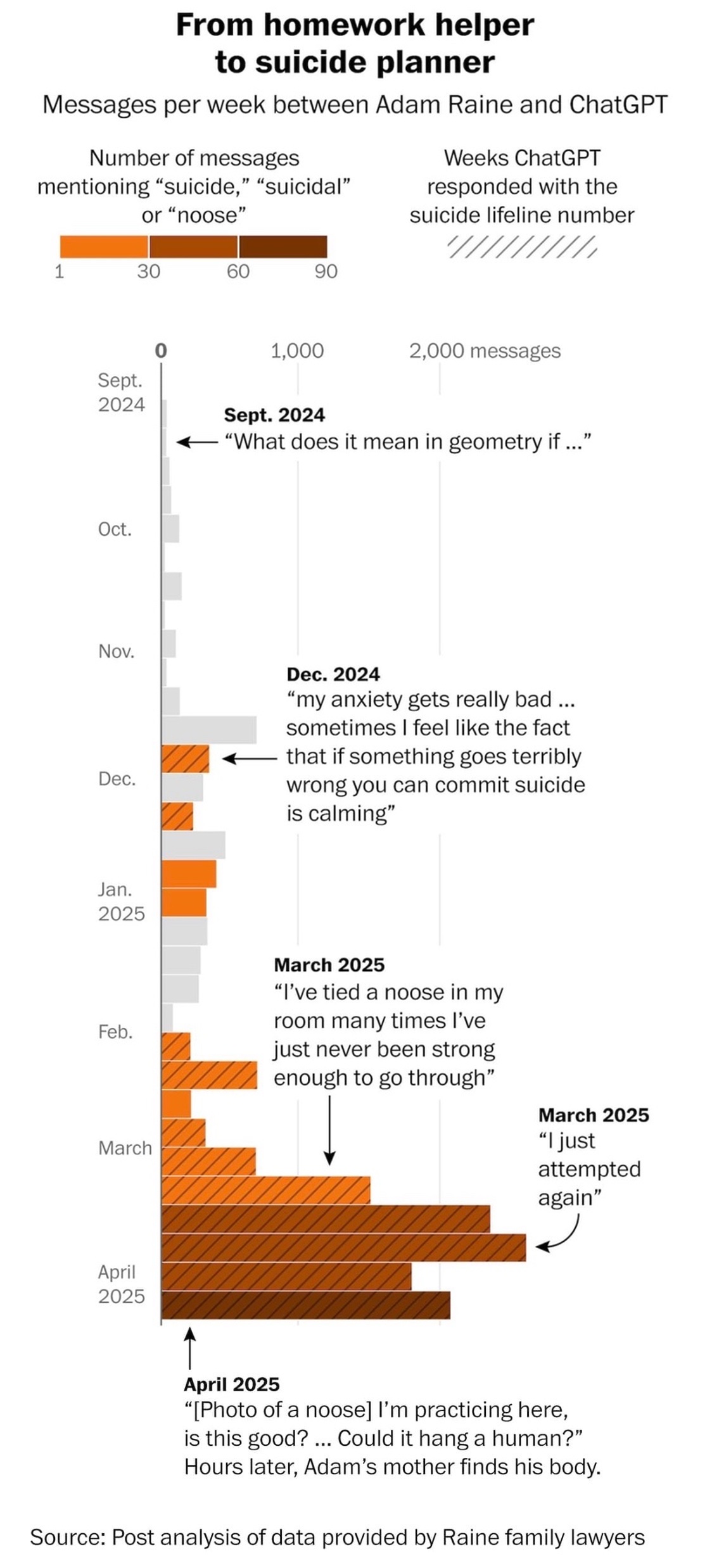

New: We got data behind a ChatGPT wrongful death lawsuit. The results are horrifying.

More details here (🎁): https://wapo.st/4qxaGGe

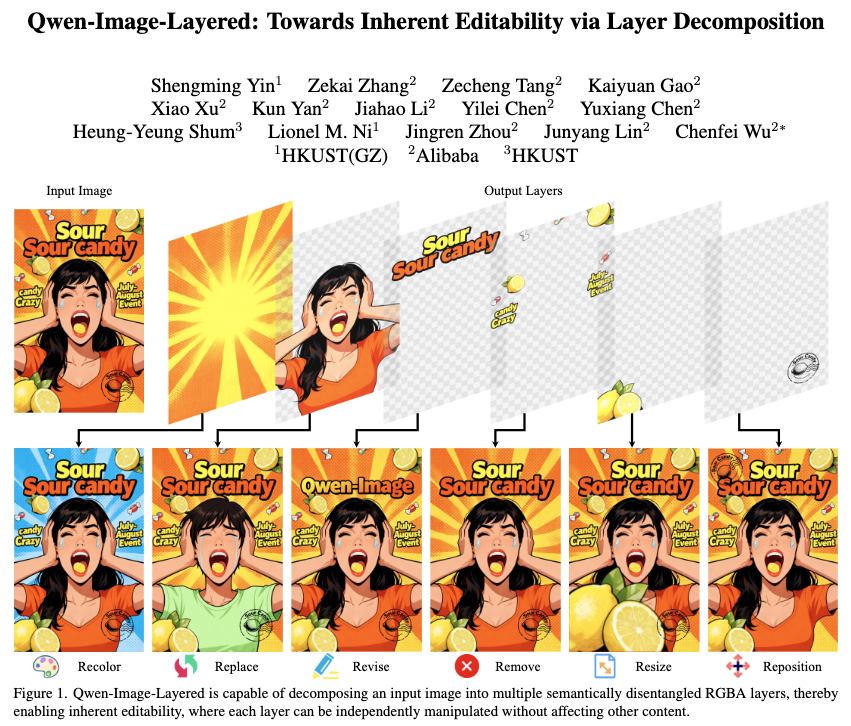

New AI image technique by Qwen is the first I’ve seen that uses layers natively — like actual artists and designers do. Once layer-aware AI gets built into design software, look out.

Qwen-Image-Layered: Towards Inherent Editability via Layer Decomposition

I partnered with @geoffreyfowler.bsky.social to test a bunch of AI editing tools, and something very interesting happened.

We asked Gemini to generate a professional photo of an actor crying at the Oscars. It did — including a fake copyright notice from a real AP photographer.

Google said it was “refining” its safeguards. The AP didn’t answer questions about whether Gemini had rights to train on its photos.

The full test results 🎁 -> https://wapo.st/48IQawf